Seismic Data Conditioning

Introduction

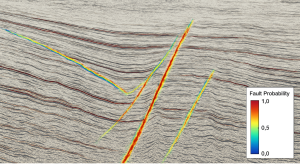

Seismic data conditioning prepares seismic volumes for interpretation and AI workflows. When the data are clean, consistent, and properly scaled, attributes, AVO analysis, inversion, and machine‑learning models are far more likely to produce reliable geological results. Conditioning is therefore the foundation of quantitative seismic analysis.

1. Techniques

Seismic data conditioning includes several key processes:

• Filtering

Attenuates energy outside the useful signal band, such as low‑frequency ground roll or high‑frequency noise, typically with a band‑pass filter.

• Scaling

Balances amplitudes across traces or gathers so that amplitude differences reflect geology rather than acquisition or processing effects.

• De‑noising

Reduces random noise while preserving geological signal.

• Trace Editing

Removes bad traces, spikes, and acquisition artifacts.

• Phase Correction

Brings the data to a consistent, known phase (commonly zero phase), which is critical for well ties and inversion.

These steps improve data quality and prepare the seismic data for advanced analysis.
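As an illustration, trace editing, filtering, scaling, and phase correction can each be sketched in a few lines of NumPy. This is a minimal sketch, not a production workflow. The function names, the array layout (one trace per row), the `spike_factor` threshold, and the brick‑wall filter design are all assumptions made for this example, and the input is synthetic.

```python
import numpy as np


def edit_traces(data, spike_factor=10.0):
    """Flag dead traces and traces whose RMS is far above the median, then zero them.

    data has shape (n_traces, n_samples). spike_factor is a hypothetical threshold.
    """
    rms = np.sqrt(np.mean(data ** 2, axis=1))
    live = rms > 0
    median_rms = np.median(rms[live]) if live.any() else 0.0
    bad = ~live | (rms > spike_factor * median_rms)
    cleaned = data.copy()
    cleaned[bad] = 0.0
    return cleaned, bad


def bandpass(data, dt, f_low, f_high):
    """Zero-phase brick-wall band-pass applied in the frequency domain.

    dt is the sample interval in seconds; f_low and f_high are in Hz.
    """
    n = data.shape[-1]
    freqs = np.fft.rfftfreq(n, d=dt)
    spectrum = np.fft.rfft(data, axis=-1)
    keep = (freqs >= f_low) & (freqs <= f_high)
    return np.fft.irfft(spectrum * keep, n=n, axis=-1)


def rms_balance(data, target_rms=1.0):
    """Scale every live trace to the same RMS amplitude.

    Trace-by-trace balancing alters relative amplitudes, so it is not
    amplitude-preserving in the sense that AVO analysis needs.
    """
    rms = np.sqrt(np.mean(data ** 2, axis=1, keepdims=True))
    scale = np.divide(target_rms, rms, out=np.zeros_like(rms), where=rms > 0)
    return data * scale


def rotate_phase(data, degrees):
    """Apply a constant phase rotation to every trace."""
    phi = np.deg2rad(degrees)
    n = data.shape[-1]
    spectrum = np.fft.rfft(data, axis=-1)
    return np.fft.irfft(spectrum * np.exp(1j * phi), n=n, axis=-1)


# Synthetic example: six traces of a 30 Hz sine plus random noise, 4 ms sampling.
rng = np.random.default_rng(0)
dt = 0.004
t = np.arange(500) * dt
data = np.tile(np.sin(2 * np.pi * 30 * t), (6, 1))
data += 0.2 * rng.standard_normal(data.shape)
data[2] = 0.0    # simulate a dead trace
data[4] *= 50.0  # simulate an anomalously strong, noisy trace

cleaned, bad = edit_traces(data)
filtered = bandpass(cleaned, dt, f_low=10.0, f_high=60.0)
balanced = rms_balance(filtered)
flipped = rotate_phase(data, 180.0)  # a 180 degree rotation reverses polarity
```

The brick‑wall filter is used only because it is short to write. Its sharp edges cause ringing in the time domain, so a tapered filter is usually preferable on real data.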

2. Applications

Conditioned seismic data supports a wide range of interpretation and quantitative workflows:

• Attribute Enhancement

Cleaner data produces clearer coherence, curvature, and spectral attributes.

• AVO Analysis

AVO analysis requires stable gathers in which the relative variation of amplitude with offset has been preserved.

• Inversion

Accurate impedance and elastic properties depend on well‑conditioned input.

• Machine Learning

AI models generally perform better when trained on consistent, noise‑reduced data.

Data conditioning directly impacts the reliability of downstream results.

Conclusion

Seismic data conditioning makes seismic volumes clean, consistent, and ready for analysis. Whether the goal is attribute analysis, AVO, inversion, or machine learning, high‑quality input data supports more accurate geological interpretation and reduces uncertainty.