Seismic Processing — A Complete Overview

Introduction

Seismic processing is the backbone of modern subsurface imaging. It transforms raw field recordings — often noisy, irregular, and full of artifacts — into coherent seismic volumes that geoscientists can interpret with confidence. Without seismic processing, the subsurface would remain a blur of unorganized reflections. With it, we gain detailed structural and stratigraphic insight that guides exploration, development, and reservoir management.

This article walks through the seismic processing workflow, explains the purpose of each stage, and highlights the value it brings to geoscience teams.

1. What Is Seismic Processing?

Seismic processing is the sequence of computational steps applied to raw seismic data to enhance signal quality, suppress noise, correct for acquisition geometry, and reposition reflections into their true subsurface locations. It bridges the gap between field acquisition and interpretation.

Typical workflow stages include:

Data ingestion

Data conditioning

Velocity analysis

Deconvolution

Multiple attenuation

Migration

Stacking

QC and deliverables

Each stage builds on the previous one, gradually improving the clarity and accuracy of the seismic image.

2. Why Seismic Processing Matters

Seismic processing directly influences interpretation quality. Poorly processed data can lead to:

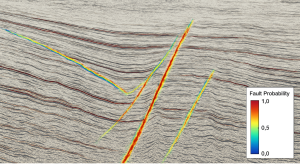

Misplaced faults

Incorrect horizon picks

Misleading amplitudes

Flawed velocity models

Increased drilling risk

High‑quality processing delivers:

✔ Clearer structural definition

Faults, folds, and stratigraphic features become easier to interpret.

✔ Better reservoir characterization

Attributes and inversion workflows rely on clean, consistent data.

✔ Reduced uncertainty

Accurate imaging supports better drilling decisions.

✔ Improved signal‑to‑noise ratio

Noise suppression reveals subtle geological features.

3. The Seismic Processing Workflow

Below is the full workflow, stage by stage, with the key output of each stage.

Stage 1: Data Ingestion

Processing begins by loading:

Raw field data (shot gathers)

Navigation files

Observer logs

Metadata

Geometry information

Key output: Verified input dataset.
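Once geometry is loaded, every trace can be assigned a source-receiver midpoint, an offset, and a CMP bin. Later stages sort and gather on these values. The sketch below illustrates that arithmetic only. The class and function names, the bin sizes, and the assumption of an unrotated bin grid aligned with the x and y axes are hypothetical choices made for this example.

```python
import math
from dataclasses import dataclass


@dataclass
class TraceGeometry:
    """Hypothetical per-trace geometry record (coordinates in metres)."""
    sx: float  # source x
    sy: float  # source y
    rx: float  # receiver x
    ry: float  # receiver y


def midpoint_and_offset(g: TraceGeometry) -> tuple[float, float, float]:
    """Source-receiver midpoint (mx, my) and absolute source-receiver offset."""
    mx = 0.5 * (g.sx + g.rx)
    my = 0.5 * (g.sy + g.ry)
    offset = math.hypot(g.rx - g.sx, g.ry - g.sy)
    return mx, my, offset


def cmp_bin(mx: float, my: float, origin: tuple[float, float],
            bin_dx: float, bin_dy: float) -> tuple[int, int]:
    """Bin indices for a midpoint, assuming an unrotated, axis-aligned bin grid."""
    ix = math.floor((mx - origin[0]) / bin_dx)
    iy = math.floor((my - origin[1]) / bin_dy)
    return ix, iy


mx, my, offset = midpoint_and_offset(TraceGeometry(0.0, 0.0, 400.0, 300.0))
print(mx, my, offset)                           # 200.0 150.0 500.0
print(cmp_bin(mx, my, (0.0, 0.0), 12.5, 25.0))  # (16, 6)
```

Traces that share the same bin indices form one CMP gather; real surveys usually also need grid rotation and flexible binning, which this sketch leaves out.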

Stage 2: Data Conditioning

Data conditioning prepares the dataset for advanced processing. It includes:

Noise filtering

Trace editing

Amplitude scaling

Bandpass filtering

Deblending (for simultaneous-source acquisition)

Key output: Cleaned, QC’d gathers.
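Amplitude scaling is often done with automatic gain control (AGC). AGC divides each sample by the RMS amplitude in a sliding window around it, so weak late arrivals become visible next to strong early ones. The following minimal sketch assumes a centred RMS window; the window length is an illustrative choice. Because AGC destroys relative amplitudes, it is usually reserved for display or for steps that do not depend on true amplitude.

```python
import math


def agc(trace: list[float], dt: float, window_s: float) -> list[float]:
    """Automatic gain control with a centred RMS window of about window_s seconds.

    The window length is an illustrative assumption, not a recommended value.
    """
    half = max(1, round(window_s / dt / 2))  # half-window length in samples
    n = len(trace)
    out = []
    for i in range(n):
        seg = trace[max(0, i - half):min(n, i + half + 1)]
        rms = math.sqrt(sum(s * s for s in seg) / len(seg))
        out.append(trace[i] / rms if rms > 0.0 else 0.0)  # leave dead zones at zero
    return out


# A trace whose amplitude decays with time comes out roughly balanced.
dt = 0.004
decaying = [math.exp(-3.0 * i * dt) * math.sin(2 * math.pi * 30 * i * dt) for i in range(500)]
balanced = agc(decaying, dt, window_s=0.5)
```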

Stage 3: Velocity Analysis

Velocity is the heart of seismic imaging. Analysts use:

Semblance panels

Velocity spectra

Tomography

Horizon‑guided updates

Key output: Initial velocity model.
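Semblance panels come from scanning trial velocities. For each zero-offset time t0, the gather is summed along the hyperbola t(x) = sqrt(t0^2 + x^2/v^2), and the coherence of that sum is measured. The sketch below assumes flat-layer hyperbolic moveout, nearest-sample picking instead of interpolation, and a small time window. The function names and parameters are illustrative, not taken from any particular package.

```python
import math


def semblance(gather, offsets, dt, t0, v, half_window=2):
    """Semblance along t(x) = sqrt(t0^2 + x^2 / v^2) over +/- half_window samples.

    Assumes hyperbolic moveout and nearest-sample picking (no interpolation).
    """
    nt = len(gather[0])
    num = den = 0.0
    for k in range(-half_window, half_window + 1):
        tau = t0 + k * dt
        if tau < 0.0:
            continue
        stack = 0.0
        for trace, x in zip(gather, offsets):
            it = round(math.sqrt(tau * tau + (x / v) ** 2) / dt)  # nearest sample on the hyperbola
            a = trace[it] if it < nt else 0.0
            stack += a
            den += a * a
        num += stack * stack
    return num / (len(gather) * den) if den > 0.0 else 0.0


def best_velocity(gather, offsets, dt, t0, velocities):
    """Trial velocity with the highest semblance at t0 (one point of a velocity pick)."""
    return max(velocities, key=lambda v: semblance(gather, offsets, dt, t0, v))


# Synthetic CMP gather: one reflection at t0 = 0.5 s with v = 2500 m/s.
dt, nt = 0.004, 500
offsets = [100.0 * i for i in range(16)]
gather = [[0.0] * nt for _ in offsets]
for trace, x in zip(gather, offsets):
    trace[round(math.sqrt(0.5 * 0.5 + (x / 2500.0) ** 2) / dt)] = 1.0
print(best_velocity(gather, offsets, dt, 0.5, [2000.0, 2250.0, 2500.0, 2750.0, 3000.0]))  # 2500.0
```

Repeating the scan for every t0 and plotting semblance against velocity gives the familiar semblance panel that analysts pick.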

Stage 4: Deconvolution

Deconvolution compresses the seismic wavelet toward a spike, attenuating source-signature effects and short-period reverberations to improve temporal resolution.

Key output: Sharper seismic wavelet.
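A common textbook form of this step is Wiener spiking deconvolution. The filter is designed from the trace's own autocorrelation, the Toeplitz normal equations are solved with Levinson recursion, and the result is convolved with the trace. The sketch below assumes a minimum-phase wavelet and white reflectivity. The filter length and the 0.1% prewhitening are illustrative values. Predictive (gapped) deconvolution, which targets reverberations more directly, uses the same normal equations with a prediction lag greater than one.

```python
def autocorr(x, nlags):
    """Autocorrelation of x for lags 0 .. nlags-1."""
    n = len(x)
    return [sum(x[t] * x[t + k] for t in range(n - k)) for k in range(nlags)]


def levinson(r):
    """Solve the Toeplitz normal equations for a prediction-error filter a (a[0] = 1)."""
    n = len(r)
    a = [1.0] + [0.0] * (n - 1)
    err = r[0]
    for m in range(1, n):
        acc = sum(a[j] * r[m - j] for j in range(m))
        k = -acc / err  # reflection coefficient for order m
        new = a[:]
        for j in range(1, m + 1):
            new[j] = a[j] + k * a[m - j]
        a = new
        err *= 1.0 - k * k
    return a


def spiking_decon(trace, nfilt=10, prewhite=0.001):
    """Spiking deconvolution; assumes a minimum-phase wavelet and white reflectivity."""
    r = autocorr(trace, nfilt)
    r[0] *= 1.0 + prewhite  # prewhitening stabilizes the inversion (illustrative value)
    a = levinson(r)
    # Causal convolution of the filter with the trace, truncated to the trace length.
    return [sum(a[j] * trace[i - j] for j in range(min(nfilt, i + 1))) for i in range(len(trace))]


# Minimum-phase wavelet [1, -0.5] convolved with two reflectors at samples 20 and 60.
trace = [0.0] * 100
for t, r in ((20, 1.0), (60, -0.7)):
    trace[t] += r             # wavelet sample 0
    trace[t + 1] += -0.5 * r  # wavelet sample 1
sharpened = spiking_decon(trace)
```

After filtering, the wavelet's second lobe (sample 21 and sample 61) is almost entirely removed, leaving close-to-spike reflectors.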

Stage 5: Multiple Attenuation

Multiples, seismic energy that has reflected more than once before reaching the receivers, can mask or mimic primary reflections from the true geology. Techniques include:

SRME (surface-related multiple elimination)

Radon demultiple

Model‑based prediction

Wave‑equation demultiple

Key output: Reduced multiple energy.
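SRME exploits the fact that surface-related multiples can be predicted from the recorded data itself. In one dimension (a single zero-offset trace) the idea reduces to a convolution. With a free-surface reflection coefficient of -1, the multiple model is M = -(P0 * P), where P is the recorded trace and P0 is the current estimate of the primaries. The sketch below iterates P0 = P - M. It assumes the source wavelet has already been removed, a flat, perfectly reflecting free surface, and no adaptive subtraction. All of these are simplifications relative to field SRME, which works in 2D or 3D and relies on adaptive subtraction.

```python
def convolve(a, b, n):
    """Linear convolution of a and b, truncated to n samples."""
    out = [0.0] * n
    for i, ai in enumerate(a):
        if ai == 0.0:
            continue
        for j, bj in enumerate(b[: n - i]):
            out[i + j] += ai * bj
    return out


def srme_1d(p, iterations=3):
    """Iterative 1D surface-related multiple elimination.

    Assumes p is an impulse response (wavelet removed) and a free-surface
    reflection coefficient of -1; no adaptive subtraction.
    """
    p0 = list(p)  # initial primary estimate: the data itself
    for _ in range(iterations):
        m = [-v for v in convolve(p0, p, len(p))]  # predicted surface multiples, M = -(P0 * P)
        p0 = [pi - mi for pi, mi in zip(p, m)]     # primaries = data - multiples
    return p0


# One primary (r = 0.5 at sample 10) plus its free-surface multiples at 20, 30 and 40.
p = [0.0] * 45
for order in range(1, 5):
    p[10 * order] = 0.5 * (-0.5) ** (order - 1)
primaries = srme_1d(p)
```

Each iteration removes one more order of multiples; in this example, three iterations leave only the primary at sample 10.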

Stage 6: Migration

Migration repositions seismic events into their correct subsurface locations. Methods include:

Kirchhoff migration

Beam migration

Reverse Time Migration (RTM)

Prestack depth migration (PSDM), which can use any of these algorithms with a depth velocity model

Key output: Migrated seismic volume.
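Kirchhoff migration can be pictured as a diffraction stack. Each image point is the sum of the input data along the diffraction hyperbola that a scatterer at that point would have produced. For simplicity, the sketch below migrates a zero-offset (stacked) time section in a constant velocity rather than prestack gathers. It uses the two-way traveltime t = sqrt(t0^2 + 4h^2/v^2), where h is the horizontal distance to the image point. It deliberately omits the amplitude and obliquity weights, anti-aliasing, and interpolation that practical Kirchhoff codes apply, so it shows the kinematics only. All function names are hypothetical.

```python
import math


def two_way_time(t0, h, v):
    """Zero-offset two-way time to a point at vertical time t0 and horizontal distance h."""
    return math.sqrt(t0 * t0 + 4.0 * h * h / (v * v))


def diffraction_section(nx, nt, dx, dt, v, ixd, itd):
    """Synthetic zero-offset section with one point diffractor at trace ixd, sample itd."""
    section = [[0.0] * nt for _ in range(nx)]
    for ix in range(nx):
        it = round(two_way_time(itd * dt, (ix - ixd) * dx, v) / dt)
        if it < nt:
            section[ix][it] = 1.0
    return section


def kirchhoff_migrate(section, dx, dt, v):
    """Constant-velocity diffraction-stack migration (no weights, nearest-sample pick)."""
    nx, nt = len(section), len(section[0])
    image = [[0.0] * nt for _ in range(nx)]
    for ix0 in range(nx):
        for it0 in range(nt):
            total = 0.0
            for ix in range(nx):
                it = round(two_way_time(it0 * dt, (ix - ix0) * dx, v) / dt)
                if it < nt:
                    total += section[ix][it]
            image[ix0][it0] = total
    return image


section = diffraction_section(nx=41, nt=150, dx=10.0, dt=0.004, v=2000.0, ixd=20, itd=100)
image = kirchhoff_migrate(section, dx=10.0, dt=0.004, v=2000.0)
print(image[20][100])  # 41.0: all 41 traces add up at the diffractor
```

The hyperbola spread across 41 traces in the input collapses to a focused peak at the scatterer's true position, which is what "repositioning events" means in practice.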

Stage 7: Stacking

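Stacking sums the traces of each CMP or common-image gather, after NMO correction or prestack migration has flattened the reflections, to improve signal-to-noise ratio and produce a coherent section.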

Key output: Final stacked section.
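The benefit of stacking can be checked numerically in an idealized case. Suppose the traces of a gather are already flattened, so the signal is identical on every trace, and the noise is random and uncorrelated between traces. Averaging N traces then leaves the signal unchanged and reduces the noise RMS by about sqrt(N). The sketch below assumes exactly that case. Real noise is often partly coherent, so field gains are typically smaller.

```python
import math
import random


def mean_stack(gather):
    """Average a flattened gather sample by sample (equal weights, no muting)."""
    return [sum(samples) / len(gather) for samples in zip(*gather)]


def rms(x):
    return math.sqrt(sum(v * v for v in x) / len(x))


# Idealized gather: identical signal on every trace plus uncorrelated Gaussian noise.
random.seed(0)
dt, nt, fold = 0.002, 1000, 64
signal = [math.sin(2 * math.pi * 25 * i * dt) for i in range(nt)]  # 25 Hz sine as the "reflection"
gather = [[s + random.gauss(0.0, 1.0) for s in signal] for _ in range(fold)]

stacked = mean_stack(gather)
noise_in = rms([g - s for g, s in zip(gather[0], signal)])
noise_out = rms([g - s for g, s in zip(stacked, signal)])
print(noise_out / noise_in)  # close to 1 / sqrt(64) = 0.125
```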

Stage 8: QC & Deliverables

Final steps include:

Visual QC

Attribute checks

Amplitude validation

Exporting SEG-Y volumes

Delivering velocity models and reports

Key output: Final SEG-Y, velocity model, QC package.
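A simple form of amplitude validation is to compute the RMS amplitude of every trace and flag traces that sit far from the survey's typical level. This catches dead channels and noise bursts before delivery, and the same check is also useful for trace editing during conditioning. The thresholds below (0.1 and 10 times the median RMS) are arbitrary placeholders, and the function names are hypothetical.

```python
import math
import statistics


def trace_rms(trace):
    return math.sqrt(sum(v * v for v in trace) / len(trace))


def flag_amplitude_outliers(traces, low=0.1, high=10.0):
    """Return {trace_index: label} for traces whose RMS is far from the median RMS.

    The low/high multipliers are placeholder thresholds, not recommended values.
    """
    rms_values = [trace_rms(t) for t in traces]
    median = statistics.median(rms_values)  # robust reference level
    flags = {}
    for i, r in enumerate(rms_values):
        if r < low * median:
            flags[i] = "dead/weak"
        elif r > high * median:
            flags[i] = "hot"
    return flags


traces = [[1.0, -1.0] * 50 for _ in range(10)]
traces[3] = [0.0] * 100                    # dead channel
traces[7] = [50.0 * v for v in traces[7]]  # noise burst
print(flag_amplitude_outliers(traces))     # {3: 'dead/weak', 7: 'hot'}
```

Using the median rather than the mean as the reference keeps a few extreme traces from shifting the threshold they are tested against.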

4. The Value of Modern Seismic Processing

Today’s workflows integrate:

Machine learning

Broadband techniques

Full waveform inversion

High‑performance computing

Advanced noise suppression

These advances deliver clearer images, better imaging of deep and structurally complex targets, and more reliable interpretation.

Conclusion

Seismic processing is essential for transforming raw field data into actionable geological insight. By following a structured workflow and leveraging modern algorithms, geoscientists can produce high‑quality seismic volumes that support exploration, development, and reservoir management.